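Readers who like to try the latest releases may have noticed that in recent Kubernetes versions the output of kubectl get cs has changed. It used to look like this: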

```
> kubectl get componentstatuses
NAME                 STATUS    MESSAGE             ERROR
controller-manager   Healthy   ok
scheduler            Healthy   ok
etcd-0               Healthy   {"health":"true"}
```

Now it looks like this:

```
> kubectl get componentstatuses
NAME                 AGE
controller-manager   <unknown>
scheduler            <unknown>
etcd-0               <unknown>
```

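At first you might suspect a broken cluster deployment. However, kubectl get cs -o yaml shows that status, message and the other fields are all present. They just are not printed. The output format of kubectl get cs has changed, so let's find out why.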

Locating the cause

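Trying older versions showed that 1.15 still behaves correctly. Debugging showed that the printing code for componentstatuses lives in k8s.io/kubernetes/pkg/printers/internalversion/printers.go. There, the AddHandlers method registers the print functions for each resource type.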

```go
func AddHandlers(h printers.PrintHandler) {
	......
	componentStatusColumnDefinitions := []metav1beta1.TableColumnDefinition{
		{Name: "Name", Type: "string", Format: "name", Description: metav1.ObjectMeta{}.SwaggerDoc()["name"]},
		{Name: "Status", Type: "string", Description: "Status of the component conditions"},
		{Name: "Message", Type: "string", Description: "Message of the component conditions"},
		{Name: "Error", Type: "string", Description: "Error of the component conditions"},
	}
	h.TableHandler(componentStatusColumnDefinitions, printComponentStatus)
	h.TableHandler(componentStatusColumnDefinitions, printComponentStatusList)
	......
}
```

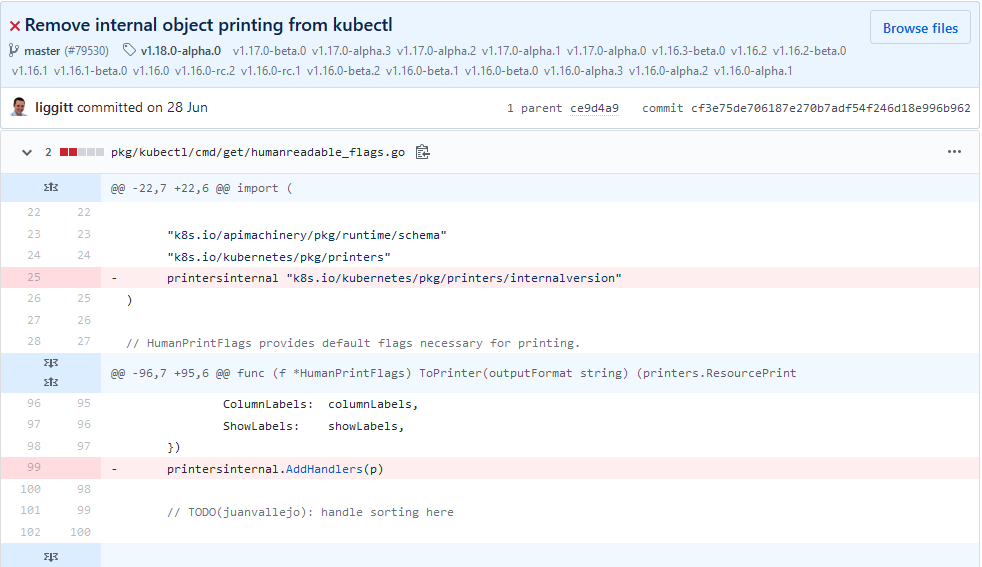
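The following self-contained Go sketch illustrates the registration idea. It implements a per-type handler registry in the spirit of AddHandlers and TableHandler. TablePrinter, TableHandler, addHandlers and the flattened ComponentStatus type are hypothetical simplifications, not the real k8s.io/kubernetes types. The sketch only shows that a type gets its own columns if and only if a handler was registered for it.

```go
package main

import (
	"fmt"
	"reflect"
	"strings"
)

// ComponentStatus is a hypothetical, flattened stand-in for the real API
// type: one health condition per component.
type ComponentStatus struct {
	Name, Status, Message, Error string
}

// handler pairs column names with a function that turns an object into a row.
type handler struct {
	columns []string
	row     func(obj interface{}) []string
}

// TablePrinter keeps one handler per Go type, in the spirit of
// PrintHandler.TableHandler (a hypothetical simplification).
type TablePrinter struct {
	handlers map[reflect.Type]handler
}

func NewTablePrinter() *TablePrinter {
	return &TablePrinter{handlers: map[reflect.Type]handler{}}
}

// TableHandler registers columns and a row function for the type of sample.
func (p *TablePrinter) TableHandler(columns []string, sample interface{}, row func(interface{}) []string) {
	p.handlers[reflect.TypeOf(sample)] = handler{columns: columns, row: row}
}

// Render returns a header line plus one row; ok is false when no handler
// was registered for the object's type.
func (p *TablePrinter) Render(obj interface{}) (string, bool) {
	h, ok := p.handlers[reflect.TypeOf(obj)]
	if !ok {
		return "", false
	}
	header := make([]string, len(h.columns))
	for i, c := range h.columns {
		header[i] = strings.ToUpper(c)
	}
	return strings.Join(header, "\t") + "\n" + strings.Join(h.row(obj), "\t") + "\n", true
}

// addHandlers plays the role of AddHandlers, for this single type only.
func addHandlers(p *TablePrinter) {
	columns := []string{"Name", "Status", "Message", "Error"}
	p.TableHandler(columns, &ComponentStatus{}, func(obj interface{}) []string {
		cs := obj.(*ComponentStatus)
		return []string{cs.Name, cs.Status, cs.Message, cs.Error}
	})
}

func main() {
	p := NewTablePrinter()
	addHandlers(p)
	if out, ok := p.Render(&ComponentStatus{Name: "scheduler", Status: "Healthy", Message: "ok"}); ok {
		fmt.Print(out)
	}
}
```

A fresh printer on which addHandlers was never called reports ok == false for the same object. That is roughly the position kubectl is left in once the client-side AddHandlers call is gone.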

AddHandlers is called from k8s.io/kubernetes/pkg/kubectl/cmd/get/humanreadable_flags.go, on the printersinternal.AddHandlers(p) line below. This code is being migrated into staging, so if you cannot find it at this path, look under staging.

```go
func (f *HumanPrintFlags) ToPrinter(outputFormat string) (printers.ResourcePrinter, error) {
	if len(outputFormat) > 0 && outputFormat != "wide" {
		return nil, genericclioptions.NoCompatiblePrinterError{Options: f, AllowedFormats: f.AllowedFormats()}
	}

	showKind := false
	if f.ShowKind != nil {
		showKind = *f.ShowKind
	}

	showLabels := false
	if f.ShowLabels != nil {
		showLabels = *f.ShowLabels
	}

	columnLabels := []string{}
	if f.ColumnLabels != nil {
		columnLabels = *f.ColumnLabels
	}

	p := printers.NewTablePrinter(printers.PrintOptions{
		Kind:          f.Kind,
		WithKind:      showKind,
		NoHeaders:     f.NoHeaders,
		Wide:          outputFormat == "wide",
		WithNamespace: f.WithNamespace,
		ColumnLabels:  columnLabels,
		ShowLabels:    showLabels,
	})
	printersinternal.AddHandlers(p)

	return p, nil
}
```

The history of humanreadable_flags.go shows that printing of internal objects was deliberately removed on 2019-06-28. The change affects 1.16 and later.

Why the change

I could not find an official explanation, so here is my own guess at how things unfolded:

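Originally, table printing of API resources was implemented entirely in kubectl.

Every other client then had to duplicate that work, possibly with inconsistent behavior. It therefore made sense to move table printing to the server side, that is, into the apiserver.

Server-side printing was rolled out gradually, so client-side printing was not removed at once and both were supported. If the server returns a Table, kubectl prints it directly. Otherwise it falls back to the printer for the specific object type. For specific objects, both the client and the server rely on k8s.io/kubernetes/pkg/printers/internalversion/printers.go.

In 1.11, kubectl's --server-print flag was set to true by default.

By 1.16, the community apparently considered every object to be printed server-side, so client-side printing was removed from kubectl.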

In fact, componentstatuses was overlooked. The likely reason is that componentstatuses differs from other objects: it is fetched live on every request rather than read from the cache. Other objects, such as pods, are read from etcd. For pods, the table formatting is defined in k8s.io/kubernetes/pkg/registry/core/pod/storage/storage.go, in the TableConvertor field below:

```go
func NewStorage(optsGetter generic.RESTOptionsGetter, k client.ConnectionInfoGetter, proxyTransport http.RoundTripper, podDisruptionBudgetClient policyclient.PodDisruptionBudgetsGetter) PodStorage {
	store := &genericregistry.Store{
		NewFunc:                  func() runtime.Object { return &api.Pod{} },
		NewListFunc:              func() runtime.Object { return &api.PodList{} },
		PredicateFunc:            pod.MatchPod,
		DefaultQualifiedResource: api.Resource("pods"),

		CreateStrategy:      pod.Strategy,
		UpdateStrategy:      pod.Strategy,
		DeleteStrategy:      pod.Strategy,
		ReturnDeletedObject: true,

		TableConvertor: printerstorage.TableConvertor{TableGenerator: printers.NewTableGenerator().With(printersinternal.AddHandlers)},
	}
	......
}
```

componentstatuses does not use a real Storage and is relatively inconspicuous, so it was overlooked.

Temporary workaround

To get output similar to the old format, the only option for now is a template:

```shell
kubectl get cs -o=go-template='{{printf "|NAME|STATUS|MESSAGE|\n"}}{{range .items}}{{$name := .metadata.name}}{{range .conditions}}{{printf "|%s|%s|%s|\n" $name .status .message}}{{end}}{{end}}'
```

Output:

```
|NAME|STATUS|MESSAGE|
|controller-manager|True|ok|
|scheduler|True|ok|
|etcd-0|True|{"health":"true"}|
```
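kubectl's go-template output is built on Go's text/template package, so the workaround can be reproduced offline. The sketch below runs the same template against hand-written sample JSON shaped like a ComponentStatusList. The data is illustrative, not captured from a cluster. Decoding into generic maps first is an assumption about roughly how kubectl feeds objects to templates.

```go
package main

import (
	"encoding/json"
	"os"
	"strings"
	"text/template"
)

// csTemplate is the same template string passed to -o=go-template above.
const csTemplate = `{{printf "|NAME|STATUS|MESSAGE|\n"}}{{range .items}}{{$name := .metadata.name}}{{range .conditions}}{{printf "|%s|%s|%s|\n" $name .status .message}}{{end}}{{end}}`

// sampleList is hand-written sample data in the shape of a ComponentStatusList.
const sampleList = `{"items":[
 {"metadata":{"name":"controller-manager"},"conditions":[{"type":"Healthy","status":"True","message":"ok"}]},
 {"metadata":{"name":"scheduler"},"conditions":[{"type":"Healthy","status":"True","message":"ok"}]},
 {"metadata":{"name":"etcd-0"},"conditions":[{"type":"Healthy","status":"True","message":"{\"health\":\"true\"}"}]}
]}`

// render decodes the JSON into generic maps and slices, then executes the
// template against the result.
func render(tmpl, data string) (string, error) {
	var obj interface{}
	if err := json.Unmarshal([]byte(data), &obj); err != nil {
		return "", err
	}
	t, err := template.New("cs").Parse(tmpl)
	if err != nil {
		return "", err
	}
	var b strings.Builder
	if err := t.Execute(&b, obj); err != nil {
		return "", err
	}
	return b.String(), nil
}

func main() {
	out, err := render(csTemplate, sampleList)
	if err != nil {
		panic(err)
	}
	os.Stdout.WriteString(out)
}
```

Running it prints the same four lines as the kubectl output above. This makes it easy to tweak the template before trying it against a real cluster.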

A closer look at the printing flow

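kubectl selects the output format with the -o flag: yaml, json, templates, or a table. The problem above occurs in table printing, which is the default when -o is not given. Let's walk through how kubectl get prints its output in detail.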

kubectl

The entry point of kubectl get is in k8s.io/kubernetes/pkg/kubectl/cmd/get/get.go. The Run field holds the function that executes the command:

```go
func NewCmdGet(parent string, f cmdutil.Factory, streams genericclioptions.IOStreams) *cobra.Command {
	......
		Run: func(cmd *cobra.Command, args []string) {
			cmdutil.CheckErr(o.Complete(f, cmd, args))
			cmdutil.CheckErr(o.Validate(cmd))
			cmdutil.CheckErr(o.Run(f, cmd, args))
		},
	......
}
```
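The Complete, Validate, Run sequence is a common convention in kubectl commands. As a hedged sketch, the trimmed-down getOptions type below shows the order of the three steps. getOptions and runGet are hypothetical, not the real GetOptions. The sketch stops at the first error, which mirrors wrapping each call in cmdutil.CheckErr. In kubectl, CheckErr terminates the command instead of returning.

```go
package main

import (
	"errors"
	"fmt"
)

// getOptions is a hypothetical, heavily trimmed stand-in for GetOptions.
type getOptions struct {
	resource      string
	output        string
	humanReadable bool
}

// Complete derives state from args and flags (in kubectl it also builds the printer).
func (o *getOptions) Complete(args []string, output string) error {
	if len(args) > 0 {
		o.resource = args[0]
	}
	o.output = output
	o.humanReadable = output == "" || output == "wide"
	return nil
}

// Validate checks the completed options before any request is made.
func (o *getOptions) Validate() error {
	if o.resource == "" {
		return errors.New("resource type is required")
	}
	return nil
}

// Run would send the request and print; here it only describes the action.
func (o *getOptions) Run() (string, error) {
	return fmt.Sprintf("get %s (human-readable=%v)", o.resource, o.humanReadable), nil
}

// runGet chains the three steps and stops at the first error.
func runGet(args []string, output string) (string, error) {
	o := &getOptions{}
	if err := o.Complete(args, output); err != nil {
		return "", err
	}
	if err := o.Validate(); err != nil {
		return "", err
	}
	return o.Run()
}

func main() {
	out, err := runGet([]string{"cs"}, "")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(out)
}
```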

The Complete method initializes the printer. The relevant code is in k8s.io/kubernetes/pkg/kubectl/cmd/get/get_flags.go:

```go
func (f *PrintFlags) ToPrinter() (printers.ResourcePrinter, error) {
	......
	if p, err := f.HumanReadableFlags.ToPrinter(outputFormat); !genericclioptions.IsNoCompatiblePrinterError(err) {
		return p, err
	}
	......
}
```

Without -o, the method above returns the result of f.HumanReadableFlags.ToPrinter(outputFormat). That result is ultimately a HumanReadablePrinter object, defined in k8s.io/cli-runtime/pkg/printers/tableprinter.go:

```go
func NewTablePrinter(options PrintOptions) ResourcePrinter {
	printer := &HumanReadablePrinter{
		options: options,
	}
	return printer
}
```
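ToPrinter tries its printer flag groups one after another. It keeps the first one that does not answer with NoCompatiblePrinterError. The sketch below models that chain with the standard errors package. noCompatiblePrinterError, jsonYamlPrinter, humanReadablePrinter and toPrinter are hypothetical names. Only the "empty format or wide" check is taken from the HumanPrintFlags.ToPrinter code quoted earlier.

```go
package main

import (
	"errors"
	"fmt"
)

// noCompatiblePrinterError is a hypothetical stand-in for
// genericclioptions.NoCompatiblePrinterError.
type noCompatiblePrinterError struct{ format string }

func (e noCompatiblePrinterError) Error() string {
	return fmt.Sprintf("unable to match a printer suitable for the output format %q", e.format)
}

func isNoCompatiblePrinterError(err error) bool {
	var target noCompatiblePrinterError
	return errors.As(err, &target)
}

// printerFactory returns a printer name, or noCompatiblePrinterError when it
// does not handle the requested format.
type printerFactory func(format string) (string, error)

func jsonYamlPrinter(format string) (string, error) {
	if format == "json" || format == "yaml" {
		return format + "-printer", nil
	}
	return "", noCompatiblePrinterError{format}
}

// humanReadablePrinter copies the check from HumanPrintFlags.ToPrinter:
// only an empty format or "wide" is accepted.
func humanReadablePrinter(format string) (string, error) {
	if len(format) > 0 && format != "wide" {
		return "", noCompatiblePrinterError{format}
	}
	return "table-printer", nil
}

// toPrinter tries each factory in order and returns the first answer that is
// not noCompatiblePrinterError (either a printer or a real error).
func toPrinter(format string, factories ...printerFactory) (string, error) {
	for _, f := range factories {
		if p, err := f(format); !isNoCompatiblePrinterError(err) {
			return p, err
		}
	}
	return "", noCompatiblePrinterError{format}
}

func main() {
	for _, format := range []string{"", "json", "xml"} {
		p, err := toPrinter(format, jsonYamlPrinter, humanReadablePrinter)
		fmt.Printf("%q -> %q, err=%v\n", format, p, err)
	}
}
```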

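Back to the main command flow. After Complete comes Run, which sends the HTTP request to the apiserver and prints the result. Before the request is sent, an important step adds the server-side printing header. Server-side printing is controlled by the --server-print flag, which defaults to true since 1.11. In that case, the server returns a metav1beta1.Table whenever it supports the conversion. Setting the flag to false disables server-side printing: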

```go
func (o *GetOptions) transformRequests(req *rest.Request) {
	......
	if !o.ServerPrint || !o.IsHumanReadablePrinter {
		return
	}

	group := metav1beta1.GroupName
	version := metav1beta1.SchemeGroupVersion.Version

	tableParam := fmt.Sprintf("application/json;as=Table;v=%s;g=%s, application/json", version, group)
	req.SetHeader("Accept", tableParam)
	......
}
```

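Printing finally goes through HumanReadablePrinter.PrintObj. If the server returned a metav1beta1.Table, it is printed directly. A metav1.Status has its own dedicated handler. Everything else falls through to the default handler: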

```go
func (h *HumanReadablePrinter) PrintObj(obj runtime.Object, output io.Writer) error {
	......
	if table, ok := obj.(*metav1beta1.Table); ok {
		return printTable(table, output, localOptions)
	}

	var handler *printHandler
	if _, isStatus := obj.(*metav1.Status); isStatus {
		handler = statusHandlerEntry
	} else {
		handler = defaultHandlerEntry
	}
	......
	if err := printRowsForHandlerEntry(output, handler, eventType, obj, h.options, includeHeaders); err != nil {
		return err
	}
	......
	return nil
}
```

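The default handler prints only the Name and Age columns. componentstatuses are fetched live rather than stored in etcd, so they have no creation timestamp. As a result, Age is printed as <unknown>.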

apiserver

Now for the server side. The apiserver's REST handlers live under k8s.io/apiserver/pkg/endpoints/handlers. kubectl get cs is served by the ListResource method in get.go, whose three key steps are:

```go
func ListResource(r rest.Lister, rw rest.Watcher, scope *RequestScope, forceWatch bool, minRequestTimeout time.Duration) http.HandlerFunc {
	return func(w http.ResponseWriter, req *http.Request) {
		......
		outputMediaType, _, err := negotiation.NegotiateOutputMediaType(req, scope.Serializer, scope)
		......
		result, err := r.List(ctx, &opts)
		......
		transformResponseObject(ctx, scope, trace, req, w, http.StatusOK, outputMediaType, result)
		......
	}
}
```

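Each step has a distinct job:
- NegotiateOutputMediaType uses the client's request headers to configure server behavior, including whether to print server-side.
- r.List fetches the resource data from the storage layer, implemented under k8s.io/kubernetes/pkg/registry.
- transformResponseObject writes the result back to the client.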
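NegotiateOutputMediaType starts from the Accept header that kubectl sent. As a rough sketch, the entries can be split on commas and parsed with the standard mime.ParseMediaType, which exposes the as, v and g parameters. This is not the apiserver's actual negotiation code. For brevity, q-values and commas inside quoted parameter values are ignored.

```go
package main

import (
	"fmt"
	"mime"
	"strings"
)

// acceptEntry is one comma-separated entry of an Accept header.
type acceptEntry struct {
	MediaType string
	Params    map[string]string
}

// parseAccept splits the header on commas and parses each entry with
// mime.ParseMediaType. Simplified: q-values get no special treatment and
// commas inside quoted parameter values are not supported.
func parseAccept(header string) ([]acceptEntry, error) {
	var entries []acceptEntry
	for _, part := range strings.Split(header, ",") {
		mediaType, params, err := mime.ParseMediaType(strings.TrimSpace(part))
		if err != nil {
			return nil, err
		}
		entries = append(entries, acceptEntry{MediaType: mediaType, Params: params})
	}
	return entries, nil
}

func main() {
	entries, err := parseAccept("application/json;as=Table;v=v1beta1;g=meta.k8s.io, application/json")
	if err != nil {
		panic(err)
	}
	for _, e := range entries {
		fmt.Println(e.MediaType, e.Params["as"], e.Params["v"], e.Params["g"])
	}
}
```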

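Start with transformResponseObject. It checks the Convert field of the outputMediaType returned by NegotiateOutputMediaType to decide whether to convert to a target type. If the target is Table, the concrete resource type returned by r.List is converted into a Table: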

```go
func doTransformObject(ctx context.Context, obj runtime.Object, opts interface{}, mediaType negotiation.MediaTypeOptions, scope *RequestScope, req *http.Request) (runtime.Object, error) {
	......
	switch target := mediaType.Convert; {
	case target == nil:
		return obj, nil
	......
	case target.Kind == "Table":
		options, ok := opts.(*metav1beta1.TableOptions)
		if !ok {
			return nil, fmt.Errorf("unexpected TableOptions, got %T", opts)
		}
		return asTable(ctx, obj, options, scope, target.GroupVersion())
	......
	}
}
```

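asTable ultimately calls scope.TableConvertor.ConvertToTable to perform the table conversion. In our case the conversion is never triggered because mediaType.Convert is nil. The cause lies in NegotiateOutputMediaType, which eventually calls the AllowsMediaTypeTransform method in k8s.io/apiserver/pkg/endpoints/handlers/rest.go. That method rejects the Table transform because scope.TableConvertor is nil. It is the same TableConvertor that would perform the final conversion to Table: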

```go
func (scope *RequestScope) AllowsMediaTypeTransform(mimeType, mimeSubType string, gvk *schema.GroupVersionKind) bool {
	......
	if gvk.GroupVersion() == metav1beta1.SchemeGroupVersion || gvk.GroupVersion() == metav1.SchemeGroupVersion {
		switch gvk.Kind {
		case "Table":
			return scope.TableConvertor != nil &&
				mimeType == "application" &&
				(mimeSubType == "json" || mimeSubType == "yaml")
		......
		}
	}
	......
}
```
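The two pieces fit together as follows. The sketch below walks the Accept entries in order and skips an entry that asks for a Table when the scope has no TableConvertor. The plain application/json fallback then wins, and Convert stays nil. accepted, mediaTypeOptions, requestScope and negotiate are hypothetical simplifications. The real negotiation code handles many more cases.

```go
package main

import "fmt"

// accepted is one pre-parsed Accept entry; As is the requested kind ("" for none).
type accepted struct {
	Type, SubType, As string
}

// mediaTypeOptions: Convert is nil when the response is sent unconverted.
type mediaTypeOptions struct {
	MediaType string
	Convert   *string
}

// requestScope keeps only the field that matters here; nil means the
// storage behind this resource cannot build tables.
type requestScope struct {
	TableConvertor interface{}
}

// allowsMediaTypeTransform mirrors the "Table" case of
// RequestScope.AllowsMediaTypeTransform.
func (s requestScope) allowsMediaTypeTransform(mimeType, mimeSubType, kind string) bool {
	if kind != "Table" {
		return false
	}
	return s.TableConvertor != nil && mimeType == "application" &&
		(mimeSubType == "json" || mimeSubType == "yaml")
}

// negotiate returns the first acceptable entry: a conversion request the scope
// cannot honor is skipped, so the plain fallback wins with Convert == nil.
func negotiate(entries []accepted, scope requestScope) (mediaTypeOptions, bool) {
	for _, e := range entries {
		mt := e.Type + "/" + e.SubType
		if e.As == "" {
			return mediaTypeOptions{MediaType: mt}, true
		}
		if scope.allowsMediaTypeTransform(e.Type, e.SubType, e.As) {
			kind := e.As
			return mediaTypeOptions{MediaType: mt, Convert: &kind}, true
		}
	}
	return mediaTypeOptions{}, false
}

func main() {
	// The two entries kubectl sends: Table first, plain JSON as fallback.
	entries := []accepted{{"application", "json", "Table"}, {"application", "json", ""}}
	cs, _ := negotiate(entries, requestScope{})                            // componentstatuses before the fix
	pods, _ := negotiate(entries, requestScope{TableConvertor: struct{}{}}) // pods
	fmt.Println("componentstatuses convert to Table:", cs.Convert != nil)
	fmt.Println("pods convert to Table:", pods.Convert != nil)
}
```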

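Digging further: a RequestScope is created during apiserver initialization, one per resource type. For example, there is one global scope for componentstatuses and another for pods. Initialization works as follows.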

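The apiserver's initialization entry points are the InstallLegacyAPI and InstallAPIs methods in k8s.io/kubernetes/pkg/master/master.go. InstallLegacyAPI handles the older resources, including componentStatuses. The full list is in NewLegacyRESTStorage below. All other resources are initialized by InstallAPIs: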

```go
func (m *Master) InstallLegacyAPI(c *completedConfig, restOptionsGetter generic.RESTOptionsGetter, legacyRESTStorageProvider corerest.LegacyRESTStorageProvider) error {
	legacyRESTStorage, apiGroupInfo, err := legacyRESTStorageProvider.NewLegacyRESTStorage(restOptionsGetter)
	......
}
```

Initializing the storage:

```go
func (c LegacyRESTStorageProvider) NewLegacyRESTStorage(restOptionsGetter generic.RESTOptionsGetter) (LegacyRESTStorage, genericapiserver.APIGroupInfo, error) {
	apiGroupInfo := genericapiserver.APIGroupInfo{
		PrioritizedVersions:          legacyscheme.Scheme.PrioritizedVersionsForGroup(""),
		VersionedResourcesStorageMap: map[string]map[string]rest.Storage{},
		Scheme:                       legacyscheme.Scheme,
		ParameterCodec:               legacyscheme.ParameterCodec,
		NegotiatedSerializer:         legacyscheme.Codecs,
	}
	......
	restStorageMap := map[string]rest.Storage{
		"pods":                          podStorage.Pod,
		"pods/attach":                   podStorage.Attach,
		"pods/status":                   podStorage.Status,
		"pods/log":                      podStorage.Log,
		"pods/exec":                     podStorage.Exec,
		"pods/portforward":              podStorage.PortForward,
		"pods/proxy":                    podStorage.Proxy,
		"pods/binding":                  podStorage.Binding,
		"bindings":                      podStorage.LegacyBinding,
		"podTemplates":                  podTemplateStorage,
		"replicationControllers":        controllerStorage.Controller,
		"replicationControllers/status": controllerStorage.Status,
		"services":                      serviceRest,
		"services/proxy":                serviceRestProxy,
		"services/status":               serviceStatusStorage,
		"endpoints":                     endpointsStorage,
		"nodes":                         nodeStorage.Node,
		"nodes/status":                  nodeStorage.Status,
		"nodes/proxy":                   nodeStorage.Proxy,
		"events":                        eventStorage,
		"limitRanges":                   limitRangeStorage,
		"resourceQuotas":                resourceQuotaStorage,
		"resourceQuotas/status":         resourceQuotaStatusStorage,
		"namespaces":                    namespaceStorage,
		"namespaces/status":             namespaceStatusStorage,
		"namespaces/finalize":           namespaceFinalizeStorage,
		"secrets":                       secretStorage,
		"serviceAccounts":               serviceAccountStorage,
		"persistentVolumes":             persistentVolumeStorage,
		"persistentVolumes/status":      persistentVolumeStatusStorage,
		"persistentVolumeClaims":        persistentVolumeClaimStorage,
		"persistentVolumeClaims/status": persistentVolumeClaimStatusStorage,
		"configMaps":                    configMapStorage,
		"componentStatuses":             componentstatus.NewStorage(componentStatusStorage{c.StorageFactory}.serversToValidate),
	}
	......
	apiGroupInfo.VersionedResourcesStorageMap["v1"] = restStorageMap

	return restStorage, apiGroupInfo, nil
}
```

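Next, the REST handlers are registered. Each handler holds a RequestScope, and the scope's TableConvertor field is taken from the resource's storage. That storage is the one created in NewLegacyRESTStorage above. For componentStatuses it is componentstatus.NewStorage(componentStatusStorage{c.StorageFactory}.serversToValidate). The field is extracted with the type assertion storage.(rest.TableConvertor), so the storage must implement the rest.TableConvertor interface. Otherwise the field is nil: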

```go
func (a *APIInstaller) registerResourceHandlers(path string, storage rest.Storage, ws *restful.WebService) (*metav1.APIResource, error) {
	......
	tableProvider, _ := storage.(rest.TableConvertor)

	var apiResource metav1.APIResource
	......
	reqScope := handlers.RequestScope{
		......
		TableConvertor: tableProvider,
		......
	}
	for _, action := range actions {
		......
		switch action.Verb {
		......
		case "LIST":
			doc := "list objects of kind " + kind
			if isSubresource {
				doc = "list " + subresource + " of objects of kind " + kind
			}
			handler := metrics.InstrumentRouteFunc(action.Verb, group, version, resource, subresource, requestScope, metrics.APIServerComponent, restfulListResource(lister, watcher, reqScope, false, a.minRequestTimeout))
			......
		}
	}
	......
}
```
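The key detail is the comma-ok type assertion. When the storage does not implement the interface, the assertion does not fail; it silently yields nil. This self-contained sketch uses hypothetical simplified types: TableConvertor without the context and options arguments, plus podStorage, componentStatusREST and buildScopes. It shows that a storage with a ConvertToTable method gets a converter, while one without it ends up with nil.

```go
package main

import (
	"fmt"
	"sort"
)

// Table and TableConvertor are hypothetical simplifications of
// metav1beta1.Table and rest.TableConvertor.
type Table struct{ Rows [][]string }

type TableConvertor interface {
	ConvertToTable(obj interface{}) (*Table, error)
}

// Storage stands in for rest.Storage: any value is accepted.
type Storage interface{}

// podStorage has ConvertToTable, like the genericregistry.Store used for pods.
type podStorage struct{}

func (podStorage) ConvertToTable(obj interface{}) (*Table, error) {
	return &Table{Rows: [][]string{{"NAME", "READY"}}}, nil
}

// componentStatusREST has no ConvertToTable, like the REST type before the fix.
type componentStatusREST struct {
	GetServersToValidate func() map[string]string
}

// requestScope keeps only the field relevant to this article.
type requestScope struct {
	TableConvertor TableConvertor
}

// buildScopes mimics registerResourceHandlers: the comma-ok assertion
// yields a nil interface when a storage does not implement TableConvertor.
func buildScopes(storages map[string]Storage) map[string]requestScope {
	scopes := map[string]requestScope{}
	for name, s := range storages {
		tableProvider, _ := s.(TableConvertor)
		scopes[name] = requestScope{TableConvertor: tableProvider}
	}
	return scopes
}

func main() {
	scopes := buildScopes(map[string]Storage{
		"pods":              podStorage{},
		"componentStatuses": componentStatusREST{},
	})
	names := make([]string, 0, len(scopes))
	for n := range scopes {
		names = append(names, n)
	}
	sort.Strings(names)
	for _, n := range names {
		fmt.Printf("%s: TableConvertor set = %v\n", n, scopes[n].TableConvertor != nil)
	}
}
```

Running it prints false for componentStatuses and true for pods. Nothing in the registration step reports the missing method.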

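The componentStatuses storage is defined in k8s.io/kubernetes/pkg/registry/core/componentstatus/rest.go. It indeed does not implement rest.TableConvertor. As a result, the TableConvertor field in the RequestScope of the componentStatuses handler is nil, and that is what ultimately causes the problem: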

```go
type REST struct {
	GetServersToValidate func() map[string]*Server
}

// NewStorage returns a new REST.
func NewStorage(serverRetriever func() map[string]*Server) *REST {
	return &REST{
		GetServersToValidate: serverRetriever,
	}
}
```

The fix

With the root cause found, the fix is simple: the storage must implement the rest.TableConvertor interface, which is defined in k8s.io/apiserver/pkg/registry/rest/rest.go:

```go
type TableConvertor interface {
	ConvertToTable(ctx context.Context, object runtime.Object, tableOptions runtime.Object) (*metav1beta1.Table, error)
}
```

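Following the storage code of other resources, I changed k8s.io/kubernetes/pkg/registry/core/componentstatus/rest.go as shown below. With this change, kubectl get cs prints the familiar table again. With kubectl get cs --server-print=false, it still prints only the Name and Age columns: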

```go
type REST struct {
	GetServersToValidate func() map[string]*Server
	tableConvertor       printerstorage.TableConvertor
}

// NewStorage returns a new REST.
func NewStorage(serverRetriever func() map[string]*Server) *REST {
	return &REST{
		GetServersToValidate: serverRetriever,
		tableConvertor:       printerstorage.TableConvertor{TableGenerator: printers.NewTableGenerator().With(printersinternal.AddHandlers)},
	}
}

func (r *REST) ConvertToTable(ctx context.Context, object runtime.Object, tableOptions runtime.Object) (*metav1beta1.Table, error) {
	return r.tableConvertor.ConvertToTable(ctx, object, tableOptions)
}
```

Closing thoughts

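I had planned to submit a PR to Kubernetes, but someone had already beaten me to it. Their fix was exactly the same as mine, except that the tableConvertor field above is capitalized. I think lowercase is better, so I was a little disappointed. The issue was first reported on 2019-09-23, right after 1.16 was released, and a PR followed on 9-24. Such a quick response shows the strength of open source and how popular Kubernetes is. Countless developers contribute to it; Kubernetes is like a star in the spotlight, with countless eyes on it. However, the PR has not been merged yet and is still under review. The bug is not very serious, so its priority is not high, and there are currently 1097 pending PRs.

Despite this small regret, solving the problem deepened my understanding of Kubernetes, and I learned a lot along the way. While reading the code I saw TODOs everywhere, and the code is constantly being refactored: code that is here today may move somewhere else tomorrow. Kubernetes has been the de facto standard for container orchestration since 2017 and is widely used in production. Even so, this rising star is still evolving and still has room to improve.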